WHAT’S HAPPENED?

Over the past year, the music industry has watched with alarm as one deepfake of popular artists after another has gone viral.

Arguably the most famous example to date arrived almost exactly a year ago: the “fake Drake” track from ‘Ghostwriter’, an AI-generated song titled Heart On My Sleeve featuring vocals mimicking Universal Music Group-signed artists Drake and The Weeknd.

Movie stars have also been hit. This past October, Tom Hanks warned on social media that an ad purportedly featuring him promoting a dental plan was a fake.

And beyond the damage being done to celebrities and IP-centered industries, deepfakes are beginning to take a toll on the broader community: last year, police in New Jersey launched an investigation into allegations that students at a high school had used AI to generate fake pornography featuring the likenesses of their underage classmates.

Now, US lawmakers are beginning to react, introducing legislation that has garnered plaudits from rightsholders – and criticism from some corners, including concerns about what these new laws could allow record companies and movie studios to do with the likenesses and voices of popular artists and actors (more on that below).

In January, the US’s federal legislature took one large step towards addressing the deepfake problem, with the introduction in the US House of Representatives of the No AI FRAUD Act.

The bill is designed to give people legal recourse if their image or voice is used in a deepfake they didn’t agree to – but, notably for the entertainment industry, it also enshrines into law rules for the commercial exploitation of deepfakes.

Short for “No Artificial Intelligence Fake Replicas And Unauthorized Duplications,” the No AI FRAUD Act does something that many in the music industry have long called for: it effectively enshrines a right of publicity in US federal law.

A right of publicity is “an intellectual property right that protects against the misappropriation of a person’s name, likeness, or other indicia of personal identity… for commercial benefit,” in the words of the International Trademark Association.

Thus far, a right of publicity hasn’t been formally enshrined in US federal law, leaving it to individual states to legislate the matter. Only 19 of 50 US states have a law explicitly guaranteeing a right of publicity, including California, Florida and New York, while another 11 states have recognized the right as a matter of common law.

And the new bill before Congress doesn’t entirely do the job either – there are aspects of a right of publicity that it doesn’t cover, such as nicknames, pseudonyms and signatures. But the proposed law does cover image and voice, and those are the two key elements that are typically violated by AI-generated deepfakes.

A few months earlier, a similar bill was put forward in the US Senate in the form of a “discussion draft.” Titled the Nurture Originals, Foster Art, and Keep Entertainment Safe (NO FAKES) Act, the bill aims to achieve many of the same things as the House’s No AI FRAUD Act.

Meanwhile, the state of Tennessee – home to the musical mecca known as Nashville – isn’t waiting for lawmakers in Washington to make the first move. Governor Bill Lee last month signed into law the bipartisan-supported Ensuring Likeness, Voice and Image Security (ELVIS) Act, which, among other things, updates the state’s existing right of publicity law to include voice, and expands its application beyond commercial uses.

Not surprisingly, the Tennessee law has gained support from many groups linked to the entertainment industry, including the Human Artistry Campaign, the Artist Rights Alliance and the American Association of Independent Music (A2IM).

For intellectual property-centered industries, such as music, movies, radio and TV, these new bills and/or laws are a big deal.

Numerous industry organizations – from the Recording Industry Association of America (RIAA) to the film, TV and radio artists’ union SAG-AFTRA – have thrown their support behind the No AI FRAUD Act as well.

“We embrace the use of AI to offer artists and fans new creative tools that support human creativity. But putting in place guardrails like the No AI FRAUD Act is a necessary step to protect individual rights, preserve and promote the creative arts, and ensure the integrity and trustworthiness of generative AI,” the RIAA said in a statement.

SO WHAT DO THE BILLS DO ABOUT IT?

The No AI FRAUD Act is a rare instance – these days, anyway – of bipartisan cooperation in the halls of Congress. It was sponsored by one Republican – Rep. Maria Salazar of Florida – and one Democrat, Rep. Madeleine Dean of Pennsylvania.

Co-sponsoring the bill are a handful of representatives from both sides of the aisle: Republican Reps. Nathaniel Moran (Texas) and Rob Wittman (Virginia), and Democratic Rep. Joe Morelle (New York).

In its first clause, the No AI FRAUD Act declares that “every person has a property right in their own likeness and voice.”

Anyone who violates that right by creating and making available an unauthorized deepfake will be liable for damages of no less than $5,000 per violation. The Senate’s NO FAKES Act provides for similar penalties.

According to an analysis of the NO FAKES Act by law firm Locke Lord, the bill expands the existing common-law protection against the use of someone’s likeness. Currently, a person whose likeness has been violated can only sue if their likeness has a commercial value. That means those students in the New Jersey school whose likenesses appeared in AI-generated porn wouldn’t have standing for a lawsuit. But under the proposed new laws, they would be able to sue the creators of those images for $5,000 per infringement.

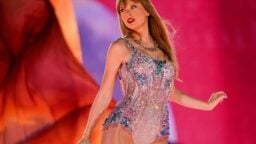

In the case of someone who makes a tool to be used to create deepfakes of a particular person, under the No AI FRAUD Act, they will be liable for damages of no less than $50,000 per violation. In other words, the bill creates a hefty penalty for anyone who wants to make a business out of making deepfakes of, say, Taylor Swift or Bad Bunny.

The penalties can run much higher if the aggrieved person can prove financial damage above those amounts, as would often be the case for a celebrity or popular musical artist.

The bill also specifically gives music recording companies the right to pursue legal action over deepfakes of artists with whom they have exclusive contracts.

The No AI FRAUD Act also gets into some specifics about the right of publicity. The right to one’s likeness and voice is “exclusive” (no one else can make a claim on your likeness and voice) and “freely transferable and descendible,” that is, the right can be inherited after someone’s death, and it can be sold or licensed to other people or entities (good news for the music and movie/TV business).

Notably, the bill prohibits the sale of likeness/voice rights by people under the age of 18, unless a court approves such an agreement.

Interestingly, the bill also states that a contract to sell or license one’s image or voice is valid if “the terms of the agreement are governed by a collective bargaining agreement.”

This comes just months after SAG-AFTRA, which represents 160,000 movie, TV and radio artists, agreed with Hollywood studios on a deal governing the use of AI-generated images of real-life performers. Under that deal, studios that create AI-generated versions of an actor will have to get that actor’s consent, and will have to pay them for the days the actor would have spent filming or recording the performance if they had done it themselves.

However, some SAG-AFTRA members criticized the deal, noting there are exceptions to the consent and payment rules; for instance, if a studio uses AI to reshoot some part of a movie or show that had been shot with a real actor, evidently they won’t need to get permission or pay for that.

There are also exceptions for the use of AI clones in “comment, criticism, scholarship, satire or parody, a docudrama, or historical or biographical work” – a carve-out that has attracted criticism from some SAG-AFTRA members.

The No AI FRAUD Act appears to expressly validate deals like this as legal – perhaps explaining some of SAG-AFTRA’s enthusiasm for it.

WHAT ARE THE ARGUMENTS AGAINST THESE BILLS?

One of the key concerns surrounding these bills, particularly the ones in Washington, is that entities that license a person’s right of publicity (for instance, a recording company or a movie studio) could effectively gain the right to clone a famous person’s likeness after their death. That would allow them to use the likeness in ways the person would not have agreed to while alive, and to take work away from living artists and performers.

The House’s No AI FRAUD Act proposes that a person’s right to their image or likeness can be terminated little more than a decade after their death, on proof that the person’s image went unused for commercial purposes for at least two years following the first 10 years after their death. However, if it was used commercially in that time, it seems the law would allow that right to remain in force for longer.

Meanwhile, the Senate’s NO FAKES Act – though it hasn’t been formally introduced as a bill – seems to go even further. According to Jennifer E. Rothman, a professor of law at the University of Pennsylvania, the NO FAKES Act would enshrine a “digital replica right” that would extend for 70 years after the death of the individual in question, i.e., the same length of time that a copyright endures in the US after the creator’s death.

“This opens the door for record labels to cheaply create AI-generated performances, including by dead celebrities, and exploit this lucrative option over more costly performances by living humans,” Rothman wrote in an analysis of the proposed law last October.

If adopted in its current proposed form, Rothman added, “this legislation would make things worse for living performers by (1) making it easier for them to lose control of their performance rights, (2) incentivizing the use of deceased performers, and (3) creating conflicts with existing state rights that already apply to both living and deceased individuals.”

If that is indeed the case with these bills, then groups like the Human Artistry Campaign (which seeks to preserve the role of real-life people in the arts in the age of AI) may want to hold off on giving them the kind of support they gave Tennessee’s ELVIS Act.

In an analysis of Tennessee’s new law, Rothman argues that, in attempting to broaden the right of publicity to cover non-commercial uses of a person’s likeness (such as, for example, the fake images at the New Jersey high school), the law may have broadened liability too far, to include “uses of a person’s name, photograph, likeness, or voice in news reporting, as well as documentaries, films, and books.”

A FINAL THOUGHT…

While the No AI FRAUD Act addresses certain specific (and increasingly urgent) concerns about generative AI, it’s far from a comprehensive attempt at regulating AI.

Though it may be tempting to compare this bill to the European Union’s AI Act, the two are quite different. While the EU’s bill – which is in the final stages of becoming law – is a wide-ranging attempt at regulating the development and use of AI throughout society, the No AI FRAUD Act is very narrow in scope, attempting to fix a single issue with AI, albeit a major one.

The EU AI Act seeks to set rules governing how AI is developed, and how governments and businesses can and can’t deploy the technology, all of it with respect to how the technology can affect individuals. The No AI FRAUD Act does no such thing.

In fact, it’s becoming quite noticeable that the US is falling behind many other jurisdictions in terms of developing policies around AI. There are some efforts afoot – Congress held hearings on the matter last summer, and the US Copyright Office is drafting a policy on AI and copyright – but compared to the EU’s comprehensive legislation, and China’s multiple edicts governing AI development and use, the US’s efforts seem positively lackluster.

Given the seemingly endless gridlock in Congress, and the fact that the US is moving into a particularly divisive election season, this is cause for some alarm. One would think that the country that is leading the world in developing and marketing AI would also lead the world in developing policies surrounding it, but that’s not the case.

In the absence of leadership from Washington, the US’s policy on AI may end up being determined by the courts, through the various cases winding their way through the legal system. That’s hardly an ideal situation.